Enterprise LLM deployment: Considerations and strategies

- anand das

- Sep 7, 2024

- 2 min read

If you hold a position as a digital or data leader in an enterprise, it is likely that your business leadership is inquiring about utilizing GenAI to enhance efficiency or boost revenue. Companies are actively investigating potential applications and implementing their initial generative AI solutions in operational settings.

As LLM deployment, or LLMOps, begins in earnest, enterprises are struggling to determine the ideal deployment method, given their data maturity and the use-cases they need to solve.

The goal of this blog post is to discuss the approaches available to enterprises and to offer a cheat sheet for selecting the ideal approach for your use-cases. Bear in mind that large organisations look to use LLMs for a broad variety of use-cases across their value chain, and they might have to adopt more than one approach.

Deployment Considerations

As you begin with your use-case, keep a few considerations in mind. By addressing them, enterprises can better prepare for the deployment of LLMs, ensuring that they achieve their intended outcomes while minimizing risks and costs.

Is it a pilot or a scalable deployment? Model deployment choices vary dramatically based on this decision. For a scalable deployment, factors such as open-source models, model fine-tuning, cost management, privacy, hosting infrastructure, and monitoring become very important. For a pilot, a quick go-to-market with a closed-source model is typically the way to go.

What is your long-term strategy? Related to the point above: is this use-case going to give you a strategic edge, and do you need to protect it as your intellectual property?

Do you have an in-house data science or tech team? Ease of deployment is an important consideration if you don't have an in-house tech team that can handle tooling and monitoring.

What is the operating cost? Running cost is a critical consideration that enterprises generally overlook. If the use-case has to handle high concurrency, model latency and per-unit cost need to be managed well. Pricing for closed-source LLMs can be expensive, and costs become difficult to manage at scale.

What quality of output is expected? Most LLMs have become reasonably good at generalized use-cases, but they struggle with domain-specific ones. Enterprises need to invest in model fine-tuning or retrieval-augmented generation (RAG) to get effective outputs.
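To make the operating-cost consideration concrete, here is a minimal back-of-the-envelope sketch in Python. It compares a per-token API bill against a flat self-hosting bill and estimates the request volume at which the two meet. Every name and number in it is a hypothetical placeholder (the `ApiPricing` class, the token prices, the GPU hourly rate), not a real vendor quote, and the self-hosted figure deliberately ignores engineering, monitoring, and fine-tuning effort, which can be substantial.

```python
from dataclasses import dataclass


@dataclass
class ApiPricing:
    """Hypothetical per-token pricing for a hosted, closed-source model."""
    usd_per_1k_input_tokens: float
    usd_per_1k_output_tokens: float


def cost_per_request(pricing: ApiPricing, input_tokens: int, output_tokens: int) -> float:
    """API cost of one request, given its average input and output token counts."""
    return (
        (input_tokens / 1000) * pricing.usd_per_1k_input_tokens
        + (output_tokens / 1000) * pricing.usd_per_1k_output_tokens
    )


def monthly_api_cost(pricing: ApiPricing, requests_per_day: int,
                     input_tokens: int, output_tokens: int, days: int = 30) -> float:
    """Monthly API bill; it grows linearly with request volume."""
    return cost_per_request(pricing, input_tokens, output_tokens) * requests_per_day * days


def monthly_self_hosted_cost(gpu_count: int, usd_per_gpu_hour: float,
                             hours_per_day: int = 24, days: int = 30) -> float:
    """Monthly infrastructure bill for always-on GPUs (assumed flat, volume-independent)."""
    return gpu_count * usd_per_gpu_hour * hours_per_day * days


def break_even_requests_per_day(pricing: ApiPricing, input_tokens: int, output_tokens: int,
                                self_hosted_monthly_usd: float, days: int = 30) -> float:
    """Daily request volume above which the API bill exceeds the self-hosted bill."""
    return self_hosted_monthly_usd / (cost_per_request(pricing, input_tokens, output_tokens) * days)


if __name__ == "__main__":
    # Placeholder numbers for illustration only.
    pricing = ApiPricing(usd_per_1k_input_tokens=0.01, usd_per_1k_output_tokens=0.03)
    api_usd = monthly_api_cost(pricing, requests_per_day=10_000, input_tokens=1000, output_tokens=500)
    hosted_usd = monthly_self_hosted_cost(gpu_count=2, usd_per_gpu_hour=2.50)
    threshold = break_even_requests_per_day(pricing, 1000, 500, hosted_usd)
    print(f"API: ${api_usd:,.0f}/month, self-hosted: ${hosted_usd:,.0f}/month")
    print(f"Break-even at about {threshold:,.0f} requests/day")
```

The point of the sketch is the shape of the comparison rather than the figures: a per-token bill scales with traffic, while a self-hosted bill is roughly fixed until you need more capacity. Plug in your own quotes and traffic estimates before drawing conclusions.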

A cheat sheet to help you decide on an LLM deployment method

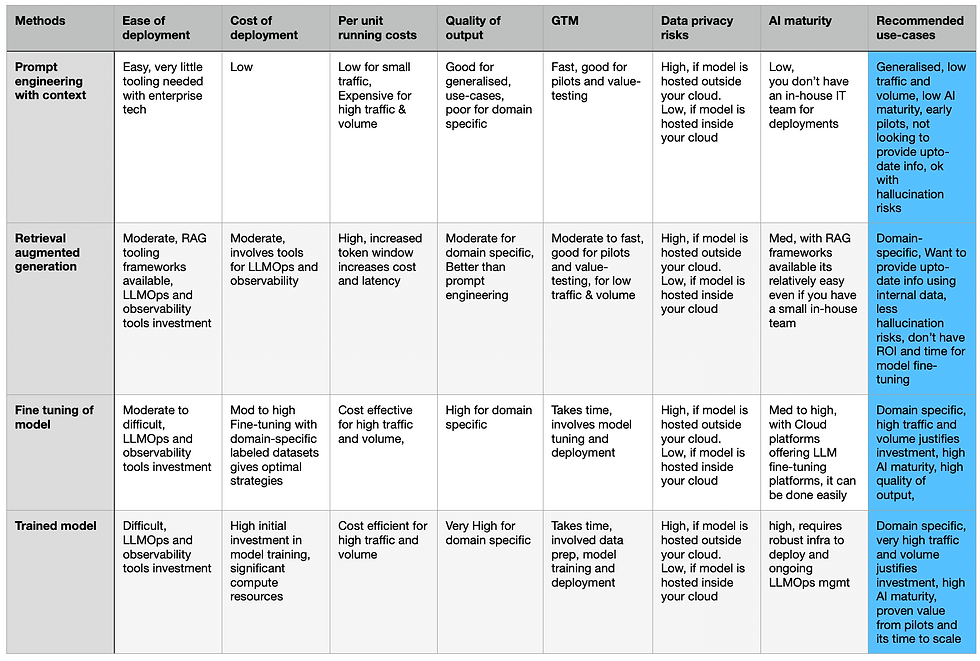

Keeping the above considerations in mind, most practitioners recommend four approaches that enterprises can take to jumpstart their LLM journey. These range from easy and cheap to difficult and expensive to deploy, and enterprises should choose based on their answers to the considerations stated above.

The cheat sheet below gives you an easy-to-use summary comparison of the four methods; the last column provides an overall summary.

Summary comparison of 4 deployment methods

Hopefully this blog gives you guidance so that you can make informed choices. Enterprise LLM deployment can become a difficult beast to manage if not planned well with thoughtful considerations.

In the next section of this blog, I will write about the most widely used reference architectures for scalable LLM deployment.
